Merkle Trees: Detecting Inconsistencies Without Comparing Everything

Distributed systems must constantly deal with the challenge of data consistency across replicas. When data is replicated across multiple nodes, divergence can occur due to network partitions, concurrent writes, or node failures. Detecting and repairing these inconsistencies efficiently is therefore a fundamental requirement in large-scale distributed databases and peer-to-peer systems.

In theory, keeping replicas consistent sounds simple: just compare the data and fix whatever is different. In practice, this idea collapses the moment your dataset grows beyond a few gigabytes. Or even a few megabytes, if your cluster is large enough. Distributed systems tend to turn simple ideas into very expensive problems.

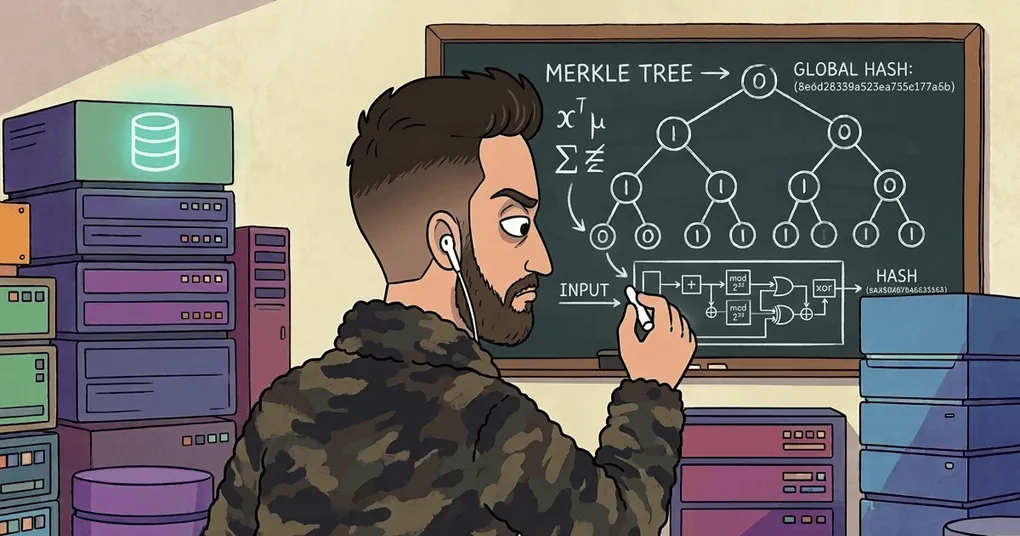

One of the most widely used mechanisms to address this problem is the Merkle Tree, a hierarchical hashing structure that enables efficient detection of inconsistencies across large datasets.

Instead of comparing entire datasets, systems compare compact cryptographic summaries of the data. And that turns a potentially massive synchronization problem into something surprisingly manageable.

This article explores how Merkle Trees work and why they are a fundamental building block in distributed systems.

Limitations of Read Repair

Many distributed databases rely on read repair mechanisms to correct inconsistencies between replicas. In this approach, when a client reads data from multiple replicas, any detected divergence is repaired immediately.

This works well—as long as the data is actually read.

And that’s the catch. If a piece of data is never read, it may stay inconsistent forever. Which is great if you enjoy Schrödinger’s data consistency.

Read repair only fixes data that someone asks for. If a portion of the dataset is never queried, inconsistencies can remain hidden indefinitely.

Ensuring full replica consistency would therefore require periodically comparing entire datasets across nodes.

A naive solution would involve:

- comparing all rows between replicas

- exchanging entire datasets

- performing pairwise record comparisons

Which is a perfectly good strategy if your database contains twelve rows and runs on a laptop.

For large-scale systems, however, this approach becomes computationally and network-wise impractical.

To reduce this cost, many distributed databases adopt Merkle Trees as part of their anti-entropy mechanisms.

Instead of comparing raw data, nodes compare hash trees summarizing that data.

Much smaller. Much faster. Much less painful.

What Is a Merkle Tree?

A Merkle Tree (or hash tree) is a tree data structure where:

- Leaf nodes contain the cryptographic hash of a data block

- Internal nodes contain the hash of the concatenation of their children

This structure provides a compact representation of large datasets and allows efficient verification of data integrity.

The concept was introduced and patented by Ralph Merkle in 1979, long before blockchains made the name fashionable.

The key property of the structure is that any modification in the data propagates upward and changes the root hash, often called the Merkle Root.

In other words, the root becomes a cryptographic fingerprint of the entire dataset.

Change one byte anywhere in the data, and the fingerprint changes. Which is convenient. Because otherwise debugging distributed systems would somehow be even worse.

Which is exactly what we want.

Structure and Construction

Merkle Root

H(ABCD)

/ \

/ \

H(AB) H(CD)

/ \ / \

H(A) H(B) H(C) H(D)

| | | |

A B C DMerkle Trees represent local data through a hierarchical structure of hashes.

The construction process proceeds as follows.

1. Leaf Level

The system scans the entire dataset and computes a cryptographic hash for each data block or record range.

These hashes form the leaf nodes of the tree.

2. Internal Nodes

Each internal node stores the hash of the concatenation of its child nodes: .

3. Recursive Aggregation

This process is repeated recursively until a single hash is produced at the top of the tree.

This final value is the Merkle Root, which represents the integrity of the entire dataset.

Although many implementations use binary trees, the structure may also allow more than two children per node.

Cryptographic hash functions such as SHA-2 are typically used.

Detecting Replica Divergence

Merkle Trees enable efficient replica comparison in distributed databases.

Instead of comparing entire datasets, replicas only need to compare their Merkle roots.

The process works as follows:

- Two replicas compute their local Merkle Trees.

- The root hashes are exchanged and compared.

Two outcomes are possible:

- Roots are equal → the replicas are consistent.

- Roots differ → inconsistencies exist somewhere in the dataset.

If the roots differ, the system recursively compares the child nodes of the tree.

This top-down comparison progressively narrows the search space until the specific data ranges that diverge are identified.

Once the inconsistent ranges are located, only those records need to be repaired.

Which means that instead of shipping gigabytes of data across the network, nodes only exchange the parts that actually differ.

Distributed systems engineers call this efficiency.

Network engineers call it not destroying the cluster. Which, as it turns out, is generally considered a desirable property in production systems.

Replica Comparison with Merkle Trees

Replica A Replica B

--------- ---------

Root Hash A Root Hash B

h0 h0'

/ \ / \

h1 h2 h1 h2'

/ \ / \ / \ / \

h3 h4 h5 h6 h3 h4 h5' h6

| | | | | | | |

D1 D2 D3 D4 D1 D2 D3* D4Interpretation:

Step 1: Compare root hashes

h0 != h0' → replicas diverge

Step 2: Compare child nodes

h1 == h1 → left subtree is identical

h2 != h2' → inconsistency in the right subtree

Step 3: Drill down

h5 != h5' → divergence located in data range D3The comparison starts at the root and moves downward only when hashes differ. If two nodes share the same hash, the entire subtree below them is guaranteed to be identical and does not need to be inspected further.

This property allows distributed databases to quickly isolate inconsistent data ranges without exchanging the entire dataset.

Cost and Trade-offs

Merkle Trees provide an efficient mechanism for detecting inconsistencies, but they introduce several trade-offs.

Update Cost

Because the tree is built bottom-up, any modification to a data block requires recomputing all hashes along the path from the leaf to the root.

This means that updates propagate upward through the tree. Fortunately, the number of nodes involved grows only logarithmically with the dataset size.

Tree Size vs Precision

There is also a trade-off between:

- Tree size

- Precision of inconsistency detection

A larger tree contains smaller data ranges per leaf node, which allows more precise identification of divergent records.

However, larger trees also increase:

- communication overhead during comparisons

- computational costs during updates

Distributed databases must therefore balance precision and efficiency when designing their Merkle Tree structures.

As usual in distributed systems, the answer is not “what is best”, but “what is best given your constraints”. Or, more realistically, what is best given your constraints, deadlines, and the vague hope that nothing catches fire at 3 a.m.

Efficient Data Integrity Verification

Merkle Trees are particularly useful for verifying the integrity of large datasets.

A fundamental property is that verifying the inclusion of a leaf node in a tree requires only logarithmic work.

If a tree contains leaves, verifying membership requires computing approximately: hash operations.

This is significantly more efficient than linear structures such as hash lists, where verification requires a number of operations proportional to the total number of elements.

This efficiency makes Merkle Trees highly suitable for large-scale distributed environments.

Merkle Proofs

A key feature of Merkle Trees is the ability to generate Merkle proofs.

A Merkle proof allows a system to demonstrate that a specific data element belongs to a dataset represented by a particular Merkle root, without revealing the entire dataset. The proof consists of the minimal set of sibling hashes needed to reconstruct the path from the leaf to the root.

Merkle Root

H(ABCD)

/ \

/ \

H(AB) H(CD)

/ \ / \

H(A) H(B) H(C) H(D)

|

CThe important detail is that the verifier does not need the entire tree.

It only needs:

- the data element

- the Merkle root

- a small number of sibling hashes along the path to the root

Proof for Element C

To prove that C belongs to the dataset, we only need the hashes along the path to the root:

Merkle Proof = {H(D), H(AB)}Verification process:

1. hC = hash(C)

2. hCD = hash(hC + H(D))

3. root' = hash(H(AB) + hCD)

4. Check:

root' == Merkle RootIf the hashes match, the element C is guaranteed to belong to the dataset represented by the Merkle root.

The verification process works by:

- hashing the data element

- combining it with the provided sibling hashes

- iteratively recomputing parent hashes up to the root

If the resulting hash matches the known Merkle root, the data is verified as authentic. The size of the proof grows only logarithmically with the size of the dataset: . This makes Merkle proofs extremely efficient for verifying data stored remotely.

In other words, you can prove that a piece of data belongs to a massive dataset without downloading the entire thing.

Which is always nice.

Applications in Distributed Systems

Merkle Trees are widely used across distributed systems where data integrity and efficient synchronization are critical.

Distributed Databases

Systems such as Cassandra and DynamoDB use Merkle Trees during anti-entropy processes to detect and repair replica divergence efficiently.

Peer-to-peer Networks

Protocols such as BitTorrent and IPFS use Merkle Trees to verify the integrity of downloaded blocks from untrusted peers.

Nodes can verify each block incrementally using the Merkle root obtained from a trusted source.

Blockchain Systems

Blockchains such as Bitcoin and Ethereum organize transactions inside blocks using Merkle Trees.

The block header contains the Merkle root of all transactions, allowing lightweight clients to verify transaction inclusion without downloading the full block.

Version Control Systems

Systems like Git rely on hash-based structures related to Merkle Trees to ensure integrity and traceability of stored objects.

Certificate Transparency

Large-scale logging systems such as Certificate Transparency use Merkle Trees to provide cryptographic proofs that certificates have been publicly logged.

These systems can contain hundreds of millions or billions of leaves, making Merkle Trees essential for scalable verification.

Implementation

The concepts behind Merkle Trees are relatively simple, but implementing them in practice still requires handling several details carefully, such as how hashes are combined, how updates propagate through the tree, and how Merkle proofs are generated and verified. To illustrate these mechanics, I built a small Java implementation of a Merkle Tree that focuses on the core aspects of the data structure: building the tree, computing the root, generating proofs, and verifying membership. Nothing fancy, just enough code to see how the pieces fit together without summoning an entire distributed database. You can explore the implementation here:

Why Merkle Trees Matter

Merkle Trees have become a foundational tool in distributed system design because they enable:

- Efficient integrity verification of very large datasets

- Efficient replica synchronization

- Minimal communication overhead

- Cryptographic commitment to data

Even a single bit change in the dataset alters the Merkle root, making tampering immediately detectable. For large-scale systems where full dataset comparison is infeasible, Merkle Trees provide an elegant and scalable solution. And perhaps most importantly, they allow distributed systems to answer a very practical question:

“Are our replicas still the same?”

Without having to compare everything. Which, in distributed systems, is often the difference between a clever algorithm and a very expensive outage. Or, at the very least, the difference between a calm afternoon and an incident report.